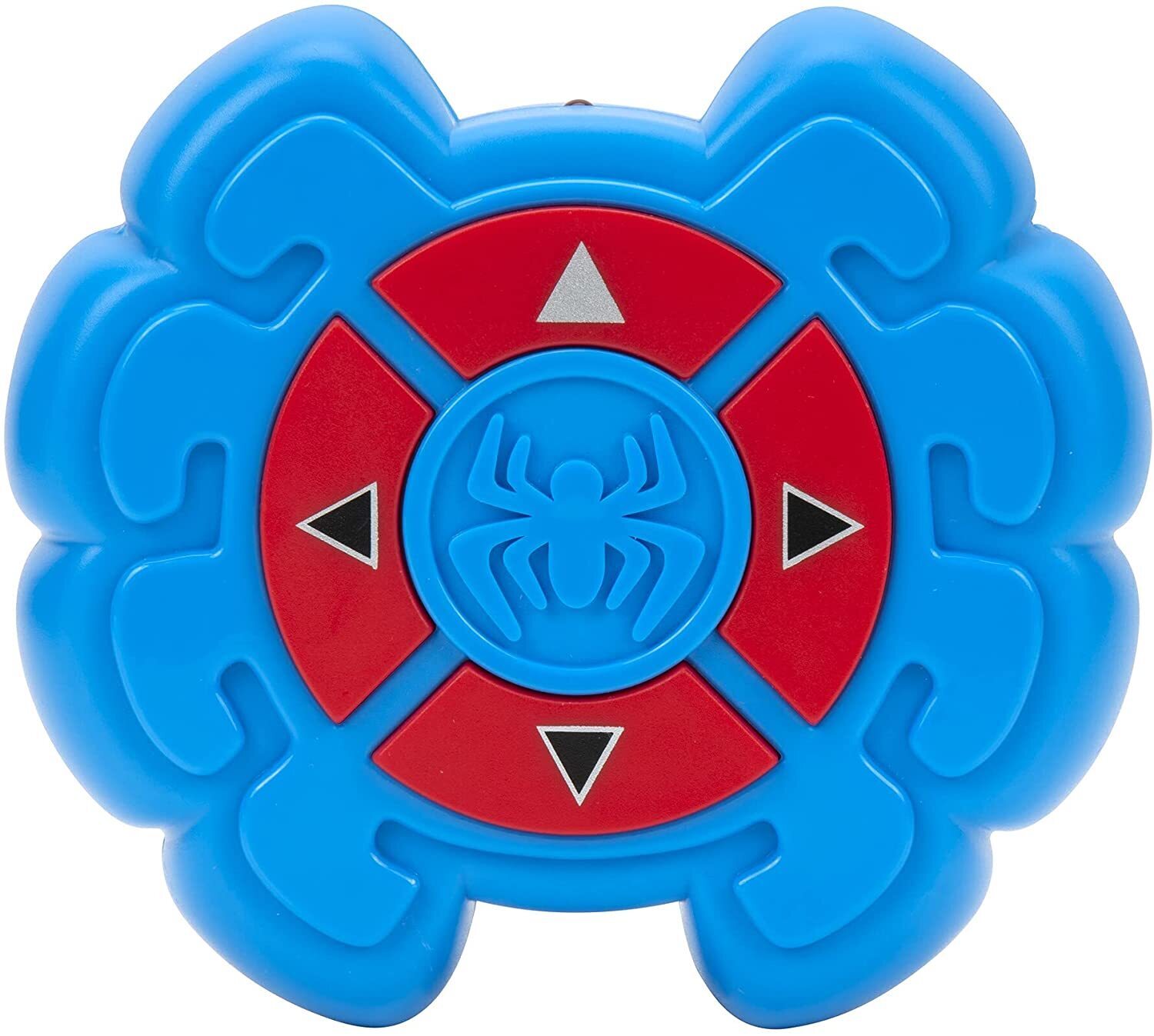

Spider-Man Wall Crawler STL Files for 3D printing is a pack of STL files. The model is static and has been adapted mainly for SLA/SLS (resin) 3D printers. Some files are pre-supported, some are not. You can find the exact details in the information provided under “Object Parts”.

Taking a stab at an answer here with no experience of the libraries. It looks like Scrapy crawlers themselves are single-threaded. To get multi-threaded behavior you need to configure your application differently or write code that makes it behave multi-threaded. It sounds like you've already tried this, so this is probably not news to you, but make sure you have configured CONCURRENT_REQUESTS and REACTOR_THREADPOOL_MAXSIZE. I can't imagine there is much CPU work going on in the crawling process, so I doubt it's a GIL issue. Excluding the GIL as an option, there are two possibilities here. First, your crawler is not actually multi-threaded. This may be because you are missing some setup or configuration that would make it so. Second, you may have set those values correctly, but your crawler is written in a way that processes requests for URLs synchronously instead of submitting them to a queue. To test this, create a global object and store a counter on it. Each time your crawler starts a request, increment the counter. Each time your crawler finishes a request, decrement the counter. Then run a thread that prints the counter every second. If your counter value is always 1, then you are still running synchronously.
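The counter test described above can be sketched in plain Python. This is only a minimal illustration, not Scrapy code: `InFlightCounter`, `report`, `fake_request`, and `run_demo` are hypothetical names, and `fake_request` just sleeps to stand in for a real request. In an actual crawler you would increment where a request is sent and decrement where its response (or error) is handled.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


class InFlightCounter:
    """Thread-safe count of requests that have started but not yet finished."""

    def __init__(self):
        self._lock = threading.Lock()
        self.current = 0
        self.peak = 0  # highest value of `current` seen so far

    def __enter__(self):
        # A request starts: increment the counter.
        with self._lock:
            self.current += 1
            self.peak = max(self.peak, self.current)
        return self

    def __exit__(self, *exc):
        # A request finishes (successfully or not): decrement the counter.
        with self._lock:
            self.current -= 1
        return False


def report(counter, stop, interval=1.0):
    """Print the in-flight count every `interval` seconds until `stop` is set."""
    while not stop.wait(interval):
        print(f"in flight: {counter.current}")


def fake_request(counter, delay=0.2):
    """Hypothetical stand-in for one crawler request (assumption: it only sleeps)."""
    with counter:
        time.sleep(delay)


def run_demo(workers, n_requests=8):
    """Run `n_requests` fake requests on `workers` threads; return the peak count."""
    counter = InFlightCounter()
    stop = threading.Event()
    reporter = threading.Thread(
        target=report, args=(counter, stop, 0.1), daemon=True
    )
    reporter.start()
    # Leaving the `with` block waits for all submitted requests to finish.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        for _ in range(n_requests):
            pool.submit(fake_request, counter)
    stop.set()
    reporter.join()
    return counter.peak
```

Calling `run_demo(1)` mimics a crawler that handles one request at a time, so the printed value never rises above 1. With `run_demo(4)` the printed value should climb above 1, which is what you would hope to see if requests really overlap.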
